Since 2009, Clarivate has been profiling the world’s leading universities and research institutions using an exclusive set of key performance indicators for our Institutional Profiles initiative. These Institutional Profiles facilitate a multidimensional and unbiased comparison of all aspects of a university’s performance regardless of the university’s mission, size, geographical location, or subject mix. Combining gold-standard bibliometric information with unique data on reputation, staff and student demographics, and funding creates a 360-degree view of all aspects of an institution’s performance.

Data for Institutional Profiles come from three primary sources: institutional data collection, an academic reputation survey, and bibliometric analysis. Visualizations enable you to track the performance of institutions over time and compare institutions on the basis of a wide range of performance measures.

Please note: In Institutional Profiles, data are updated annually. For both the institutional data collection and the corresponding bibliometric data, the data year is always the actual year minus 2; for the institutional data, this means the academic year that ended in the actual year minus 2. The reputation survey, however, is from the actual year. So the October 2022 update includes data provided by the institutions for the academic year 2019-20, bibliometric data from 2020, and reputation survey data from earlier in 2022.
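The year offsets in this note can be sketched as a small helper. This is only an illustration of the rule as stated; the function name `data_years` and the dictionary keys are hypothetical and not part of any Clarivate product or API.

```python
def data_years(update_year: int) -> dict:
    """Return the data vintages behind an annual update (illustrative helper)."""
    # Institutional and bibliometric data lag the update year by two years.
    data_year = update_year - 2
    return {
        # Academic year that ended in data_year, formatted like "2019-20".
        "institutional_academic_year": f"{data_year - 1}-{data_year % 100:02d}",
        "bibliometric_year": data_year,
        # The reputation survey is taken in the update year itself.
        "reputation_survey_year": update_year,
    }


print(data_years(2022))
```

For the October 2022 update, this gives the academic year 2019-20, bibliometric year 2020, and survey year 2022, matching the note.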

The current Institutional Profiles data was updated in October 2022 and includes data provided by the institutions for the academic year 2019-20, bibliometric data from 2020, and reputation survey data from earlier in 2022.

For the bibliometric indicators, paper and citation counts are limited to the SCIE, SSCI, and AHCI indexes from Web of Science; paper counts include only articles and reviews.

Read more information about Global Institutions Profile Project.

For each indicator, the number shown is a rescaled cumulative probability score rather than the actual value. This score is a number from zero (worst) to 100 (best) that indicates how an institution compares against the distribution of all institutions; it effectively represents the percentage of all institutions that perform worse than it on a given indicator. This makes it easier to compare an institution across the different Institutional Profiles indicators.

For example, in 2019, the University of Toronto scored 94.62 on papers per academic and research staff, while it scored only 34.28 on academic staff per student. One can therefore conclude that its staff/student ratio is below average, with only 34.28% of institutions scoring worse, but its size-adjusted research output is in the top decile, well above average.

In contrast, MIT scored 75.35 for academic staff per student (better than three-quarters of all other institutions) and 35.17 for papers per academic and research staff. MIT has more staff per student than Toronto, but each staff member produces fewer papers.

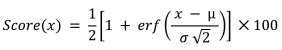

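For most indicators in Institutional Profiles, we assume the data are normally distributed. We therefore use the cumulative probability function for the normal distribution to calculate the scores:

$$\text{score}(x) = 100 \times \frac{1}{2}\left[1 + \operatorname{erf}\left(\frac{x - \mu}{\sigma\sqrt{2}}\right)\right]$$

where erf is the error function, x is the institution’s value for the indicator, µ is the mean of the values included in the dataset, and σ is the standard deviation.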
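As a minimal sketch of the normal-distribution score described above, the following assumes the score is the standard normal cumulative probability multiplied by 100. The function name `normal_score` and the sample numbers are illustrative assumptions, not values from Institutional Profiles.

```python
import math


def normal_score(value: float, mean: float, std_dev: float) -> float:
    """Rescaled cumulative probability score (0-100) under a normal distribution."""
    if std_dev <= 0:
        raise ValueError("std_dev must be positive")
    # Normal CDF expressed through the error function, then scaled to 0-100.
    z = (value - mean) / (std_dev * math.sqrt(2))
    return 100 * 0.5 * (1 + math.erf(z))


# Hypothetical indicator with mean 10 and standard deviation 2.
print(round(normal_score(10, 10, 2), 2))  # an institution exactly at the mean
print(round(normal_score(12, 10, 2), 2))  # one standard deviation above the mean
```

Under this assumption, an institution at the mean scores 50, and one a full standard deviation above the mean scores roughly 84.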

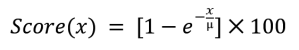

The research and teaching reputation data, however, are more skewed and are assumed to follow an exponential distribution. This has a simpler formula for its cumulative probability function:

$$\text{score}(x) = 100 \times \left(1 - e^{-x/\mu}\right)$$

where x is the institution’s value and µ is again the mean.
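The exponential case can be sketched in the same way, assuming the score is the exponential cumulative probability with mean µ, multiplied by 100. The name `exponential_score` and the vote counts below are hypothetical.

```python
import math


def exponential_score(value: float, mean: float) -> float:
    """Rescaled cumulative probability score (0-100) under an exponential distribution."""
    if mean <= 0:
        raise ValueError("mean must be positive")
    # Values at or below zero sit at the very bottom of the distribution.
    value = max(value, 0.0)
    return 100 * (1 - math.exp(-value / mean))


# Hypothetical survey in which institutions receive 40 votes on average.
print(round(exponential_score(40, 40), 2))   # an institution exactly at the mean
print(round(exponential_score(200, 40), 2))  # an institution with five times the mean
```

Because the distribution is skewed, an institution exactly at the mean already scores about 63 rather than 50.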

Subject normalization works in the same way as it does for publication counts: each value is divided by a baseline. In this case, however, the baseline is the interquartile mean, rather than the overall mean, for a set of institutions for a given indicator, subject and year, which reduces the effect of outliers.
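The baseline step can be illustrated with a short sketch. The text does not specify exactly how the interquartile mean is computed, so the version below is an assumption: it sorts the values, drops the lowest and highest quarter (rounded down), and averages the rest. The function names and peer values are hypothetical.

```python
def interquartile_mean(values):
    """Mean of the middle half of the values (simple trimming, an assumed method)."""
    ordered = sorted(values)
    n = len(ordered)
    if n == 0:
        raise ValueError("values must not be empty")
    trim = n // 4  # number of values dropped from each end
    middle = ordered[trim:n - trim]
    return sum(middle) / len(middle)


def normalize_by_subject(raw_value, peer_values):
    """Divide an institution's raw value by the interquartile-mean baseline.

    Assumes the baseline is non-zero.
    """
    return raw_value / interquartile_mean(peer_values)


# Hypothetical values for one indicator, subject and year; 100 is an outlier.
peers = [1, 2, 3, 4, 5, 6, 7, 100]
print(interquartile_mean(peers))       # baseline from the middle half: 4.5
print(sum(peers) / len(peers))         # overall mean, pulled up by the outlier: 16.0
print(normalize_by_subject(9, peers))  # 9 / 4.5 = 2.0
```

The single outlier moves the overall mean from 4.5 to 16, which is why the interquartile mean is the more stable baseline here.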

For the overall normalized value, a weighted average of normalized subject values is used, weighting by the raw value for that indicator from the institution. So, for instance, if a given institution only has staff in A&H and Social Sciences, and has twice as many A&H staff as Social Sciences staff, then we’d use 2/3 of its A&H normalized staff count and 1/3 of its Social Sciences normalized staff count to calculate its overall normalized staff count. This is all done based on the raw data from the institution before the final scores are calculated.
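The weighting in this example can be written out as a short sketch. The staff counts and normalized values below are made up for illustration, and the function name `overall_normalized` is hypothetical.

```python
def overall_normalized(raw_by_subject, normalized_by_subject):
    """Weighted average of normalized subject values, weighted by raw values."""
    total_raw = sum(raw_by_subject.values())
    if total_raw == 0:
        raise ValueError("raw values must not sum to zero")
    return sum(
        (raw_by_subject[subject] / total_raw) * normalized_by_subject[subject]
        for subject in raw_by_subject
    )


# Twice as many A&H staff as Social Sciences staff, so the weights are 2/3 and 1/3.
raw_staff = {"A&H": 200, "Social Sciences": 100}
normalized_staff = {"A&H": 1.5, "Social Sciences": 0.9}  # hypothetical normalized values
print(round(overall_normalized(raw_staff, normalized_staff), 4))  # 2/3 * 1.5 + 1/3 * 0.9
```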

The simplest distinction between academic and research staff is that academic staff have teaching duties. Typically, academic staff hold a post such as lecturer, reader or professor and are likely to be permanent employees of the institution, while researchers are employed only to perform research, and their posts might be researcher, research fellow or post-doctoral researcher.

Indicators

The proportion of the academic faculty who are international. This is an indicator of the institution’s ability to attract staff from a global environment.

Source: Institutional data collection

Value: The number of international academic staff divided by the number of academic staff.

The number of academic staff per student, sometimes referred to as the staff-student ratio, is an indication of the student environment.

Source: Institutional data collection

Value: The number of academic staff divided by the total number of students.

An indication of how successful the institution is at producing doctorates scaled against the number of academic staff.

Source: Institutional data collection

Value: The number of doctoral degrees awarded divided by the number of academic staff and then normalized by subject as described above.

An indication of which end of the education spectrum the institution focuses on.

Source: Institutional data collection

Value: The number of doctoral degrees awarded divided by the number of undergraduate degrees awarded.

Institutional income scaled against the number of academic staff, to give an indication of how well resourced an institution is regardless of its size.

Source: Institutional data collection

Value: The total institutional income divided by the number of academic staff.

This indicator is a modification of the category normalized citation impact (CNCI) that takes into account the country/region where the institution is based. This reflects the fact that regions differ in publication and citation behavior because of factors such as policy, language and size of the research network.

In the standard IC dataset, CNCI is calculated using baselines based on category, document type and year of publication. The CNCI used in Institutional Profiles, however, only uses the Web of Science category and year of publication; the baseline does not vary by document type in Institutional Profiles.

In addition, the standard IC dataset allows the CNCI to be calculated for all documents, regardless of their type, whereas Institutional Profiles uses only Articles and Reviews when calculating the CNCI.

Source: Bibliometric analysis

Value: This indicator is calculated as the category normalized citation impact of the institution divided by the square root of the category normalized citation impact of the country in which it is based.
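The calculation in this value definition can be sketched as follows. The function name `country_adjusted_cnci` and the sample CNCI figures are illustrative assumptions.

```python
import math


def country_adjusted_cnci(institution_cnci: float, country_cnci: float) -> float:
    """Institution CNCI divided by the square root of its country's CNCI."""
    if country_cnci <= 0:
        raise ValueError("country_cnci must be positive")
    return institution_cnci / math.sqrt(country_cnci)


# Hypothetical institution with CNCI 1.5 in a country whose CNCI is 1.44.
print(round(country_adjusted_cnci(1.5, 1.44), 4))  # 1.5 / 1.2
```

Taking the square root softens the adjustment: an institution in a country with above-average CNCI is scaled down, but by less than a full division by the country value would imply.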

The institution’s research output scaled by the number of academic and research staff. As research output is expected to be higher in the sciences than the arts, this is normalized by subject.

Source: Institutional data collection and bibliometric analysis

Value: The number of papers divided by the total number of academic and research staff combined and then normalized by subject as described above.

This is an indicator of the institution’s ability to collaborate in a global environment.

Source: Bibliometric analysis

Value: The proportion of the papers authored by the institution that contain a co-author from a country/region other than the country/region in which the institution is based.
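A minimal sketch of this proportion is shown below. It assumes each paper is represented simply as the set of country/region codes of its authors; that data layout, the function name and the sample records are hypothetical.

```python
def international_collaboration_share(papers, home_country):
    """Share of papers with at least one author from outside the home country/region."""
    if not papers:
        return 0.0
    international = sum(
        1
        for author_countries in papers
        if any(country != home_country for country in author_countries)
    )
    return international / len(papers)


# Four hypothetical papers from a Canadian institution; two have foreign co-authors.
papers = [{"CA"}, {"CA", "US"}, {"CA", "GB", "DE"}, {"CA"}]
print(international_collaboration_share(papers, "CA"))  # 2 of 4 papers
```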

Research income scaled against the academic staff, to give an indication of how well resourced an institution is regardless of its size. It is also an indication of the academic staff’s ability to attract research funding. As research income is expected to be higher in the sciences than the arts, this is normalized by subject.

Source: Institutional data collection

Value: The total research income divided by the number of academic staff and then normalized by subject as described above.

Research income from industry scaled against the number of academic staff to give an indication of how successful an institution is at acquiring income from industry regardless of its size.

Source: Institutional data collection

Value: The institution’s research income from industry divided by the number of academic staff.

Results of the academic reputation survey for research globally.

Source: Academic reputation survey

Value: The percentage of all institutions that received fewer votes than the selected institution.

The proportion of the students who are international, irrespective of their level of study. This is an indicator of the institution’s ability to attract students from a global environment.

Source: Institutional data collection

Value: The number of international students divided by the number of students.

Results of the academic reputation survey for teaching globally.

Source: Academic reputation survey

Value: The percentage of all institutions that received fewer votes than the selected institution.